
This example requires TensorFlow Addons, which can be installed using the following This implementation covers (MAE refers to Masked Autoencoder):Īs a reference, we reuse some of the code presented in

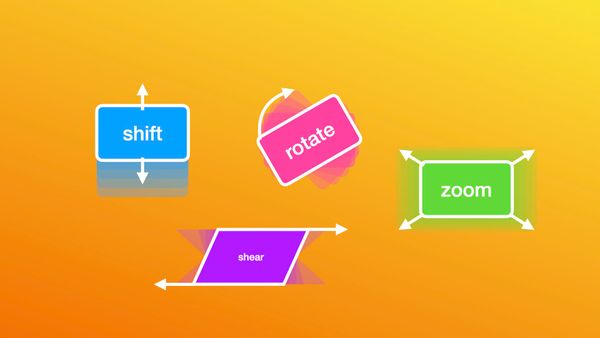

Pretraining a scaled down version of ViT, we also implement the linear evaluation In the spirit of "masked language modeling", this pretraining task could be referred They mask patches of an image and, through an autoencoder predict the masked patches. The pretraining algorithm of BERT ( Devlin et al.), the authors propose a simple yet effective method to pretrain large

Masked Autoencoders Are Scalable Vision Learnersīy He et. In the field of natural language processing, theĪppetite for data has been successfully addressed by self-supervised pretraining. In deep learning, models with growing capacity and capability can easily overfit Author: Aritra Roy Gosthipaty, Sayak Paulĭescription: Implementing Masked Autoencoders for self-supervised pretraining.
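To make the masking idea concrete, here is a minimal, framework-free sketch of how an image can be split into patches and a random subset hidden from the encoder. It is not the code from the example itself: the helper names `split_into_patches` and `random_mask_indices`, the tiny 8x8 toy image, and the 0.75 mask ratio are all assumptions chosen for illustration.

```python
import random


def split_into_patches(image, patch_size):
    """Split a 2D image (list of rows) into non-overlapping square patches.

    Hypothetical helper for illustration; patches are returned in row-major order.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [row[left:left + patch_size] for row in image[top:top + patch_size]]
            patches.append(patch)
    return patches


def random_mask_indices(num_patches, mask_ratio=0.75, seed=None):
    """Randomly choose which patch indices are masked and which stay visible.

    mask_ratio is an illustrative assumption, not a value taken from the text.
    """
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)
    num_masked = int(num_patches * mask_ratio)
    masked = sorted(indices[:num_masked])
    visible = sorted(indices[num_masked:])
    return masked, visible


# Toy 8x8 "image" whose pixel values are just their flattened positions.
image = [[row * 8 + col for col in range(8)] for row in range(8)]
patches = split_into_patches(image, patch_size=2)
masked, visible = random_mask_indices(len(patches), mask_ratio=0.75, seed=0)

# Only the visible patches would be passed to the encoder; the decoder
# would then be trained to reconstruct the patches at the masked positions.
visible_patches = [patches[i] for i in visible]
print(len(patches), len(masked), len(visible))  # 16 12 4
```

In a real pipeline the same index split would be applied to batched tensors rather than nested lists, but the bookkeeping (shuffle, split by ratio, keep both index sets for reconstruction) is the same.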
